Module System Dependency Injection in React & Friends

What does 'use the module system for global dependency injection' actually mean, and is it a good substitute for real DI?

While the term conjures up legacy-decorator-wielding, auto-wiring frameworks like TSyringe, most of the time dependency injection is as simple as either accepting your dependencies as arguments/props, or leveraging some kind of context primitive to inherit the value without passing it in directly (think React.Context, AsyncLocalStorage or something similar).
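As a rough sketch of those two styles (the `Logger` interface and function names below are hypothetical, chosen only for illustration), the same function can take its dependency as an argument or inherit it from an `AsyncLocalStorage` scope:

```typescript
import { AsyncLocalStorage } from "node:async_hooks";

// Hypothetical dependency interface, for illustration only.
interface Logger {
  log(message: string): void;
}

// 1. Argument-based injection: the caller hands the dependency in.
function greet(logger: Logger, name: string): void {
  logger.log(`Hello, ${name}`);
}

// 2. Context-based injection: the dependency is inherited from the enclosing scope.
const loggerStorage = new AsyncLocalStorage<Logger>();

function greetFromContext(name: string): void {
  const logger = loggerStorage.getStore();
  if (!logger) throw new Error("No logger provided for this scope");
  logger.log(`Hello, ${name}`);
}

// A tiny in-memory logger so both styles can be exercised.
const lines: string[] = [];
const memoryLogger: Logger = {
  log: (message) => {
    lines.push(message);
  },
};

greet(memoryLogger, "Ada");
loggerStorage.run(memoryLogger, () => greetFromContext("Grace"));
```

In both cases the function never imports a concrete logger; it only depends on the shape it was given.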

Avoiding baking in dependencies gives us a huge amount of flexibility, including:

- Swapping out the implementation of an interface depending on how a component is supposed to be used. See this excellent refresher on the value of composition in React.

- Allowing components to be more easily tested, since out-of-process dependencies can be replaced with test doubles.

- Allowing different environments (e.g. local, production) to behave differently.

- Changing the implementation of something based on an external runtime variable, such as a feature flag.

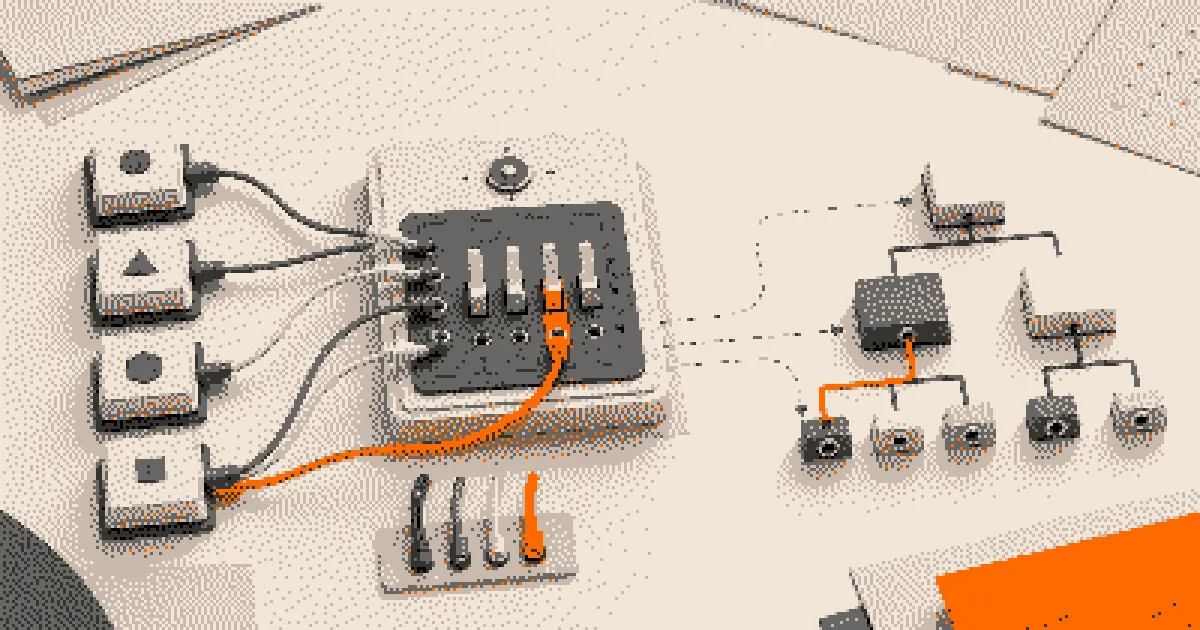

In this sense we can break DI down into two flavors:

- Static DI: Dependencies are chosen based on static configuration (such as environment variables), wired up once and fixed for the lifetime of the program. Even if technically these are controlled by a runtime value, the wiring happens once.

- Runtime DI: Dependencies vary based on runtime data. A dependency could be dynamically selected based on tenant, user, or some other external factor. In the case of React components, it’s usually their parent React.Context. This could also take the form of a service-locator, where dependencies are static (i.e. everyone gets the same one), but can still be hot-swapped while running.
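To make the distinction concrete, here is a minimal sketch of both flavors. The `AnalyticsClient` type, the two clients, and the tenant map are assumptions made up for this example:

```typescript
// Hypothetical interface and implementations, for illustration only.
type AnalyticsClient = { sendEvent: (name: string) => void };

const sent: string[] = [];
const noopClient: AnalyticsClient = { sendEvent: () => {} };
const loggerClient: AnalyticsClient = {
  sendEvent: (name) => {
    sent.push(`logger:${name}`);
  },
};

// Static DI: the choice is made once from configuration and then fixed.
// In a real app the argument might come from an environment variable.
function createStaticClient(strategy: string | undefined): AnalyticsClient {
  return strategy === "noop" ? noopClient : loggerClient;
}
const staticClient = createStaticClient("noop");

// Runtime DI: the choice is made per call, based on runtime data (here, a tenant id).
const clientsByTenant = new Map<string, AnalyticsClient>([["acme", loggerClient]]);

function clientForTenant(tenantId: string): AnalyticsClient {
  return clientsByTenant.get(tenantId) ?? noopClient;
}

clientForTenant("acme").sendEvent("Home"); // recorded by loggerClient
clientForTenant("unknown").sendEvent("Home"); // silently dropped by noopClient
```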

Testing a module that uses an out-of-process dependency is as simple as passing a test-double which conforms to the interface specified by the consumer. Same with swapping out the implementation.
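A minimal sketch of what that can look like, assuming a hypothetical `createPageTracker` factory and a single `main` function acting as the entry point where real implementations are chosen:

```typescript
// The consumer owns the interface it needs (all names here are hypothetical).
type AnalyticsClient = { sendEvent: (name: string) => void };

function createPageTracker(client: AnalyticsClient) {
  return function trackPageView(page: string): void {
    client.sendEvent(page);
  };
}

// Entry point: the one place where a real implementation is wired in.
function main(): void {
  const consoleClient: AnalyticsClient = {
    sendEvent: (name) => console.log(`event: ${name}`),
  };
  const trackPageView = createPageTracker(consoleClient);
  trackPageView("Home");
}

// In a test, a hand-rolled double that conforms to the same interface stands in.
function createRecordingClient() {
  const events: string[] = [];
  const client: AnalyticsClient = {
    sendEvent: (name) => {
      events.push(name);
    },
  };
  return { client, events };
}
```

Swapping the implementation and testing the module are the same move: pass a different object to `createPageTracker`.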

The meta-frameworks

React meta-frameworks, however, rarely have such a clean entry point. React.Context is still king for DI in the component tree (server components notwithstanding), but what about the rest?

Routes, actions, and loaders are abstracted away by the bundler. In the case of TanStack Start and React Router, we do at least have the concept of middleware and ‘server context’, which means we have an opportunity to inject/override dependencies.

You can even do this kind of thing in React Router:

```tsx
import { userContext } from "~/context";
import { UserPanel } from "./UserPanel.server";
import type { Route } from "./+types/root";

export async function loader({ context }: Route.LoaderArgs) {
  // Context can be set by middleware, and overridden.
  const user = context.get(userContext);
  return <UserPanel user={user} />;
}
```

A version of Next.js server context is possible but cursed, and despite sharing a name would behave quite differently.

Instead, a recurring theme I hear in the Next.js community is:

Use the module system for global dependency injection.

This is a third, stricter form of DI: ‘compile-time’ dependency injection.

Using the module system

You’ve probably seen Node’s conditional exports, but you might not have seen subpath imports:

```json
// package.json
{
  "imports": {
    "#analytics": {
      "analytics-noop": "./analytics-noop.js",
      "analytics-logger": "./analytics-logger.js",
      "default": "./analytics-logger.js"
    }
  }
}
```

They’re great for native alias support (no more syncing TypeScript ~ aliases across build systems and test runners), but they also provide an opportunity for flag-based dependency injection:

```bash
# Will use `analytics-noop.js`
NODE_OPTIONS="--conditions=analytics-noop" npm run dev

# Will use `analytics-logger.js`
NODE_OPTIONS="--conditions=analytics-logger" npm run dev

# Since it's the default, will also use `analytics-logger.js`
npm run dev
```

This is the idea people are usually pointing at when they say ‘use the module system’: don’t pass an object through context; instead arrange for imports to resolve to the desired implementation.

Turbopack (unfortunately) has its own module resolution setup, but with a little effort you can force it to respect native import conditions:

```typescript
// next.config.ts
import { createRequire } from "node:module";
import path from "node:path";
import type { NextConfig } from "next";

const projectRoot = process.cwd();

// Next transpiles next.config.ts to CJS via SWC, so let's not try to rely on `import.meta.resolve`.
const localRequire = createRequire(path.join(projectRoot, "package.json"));

/**
 * Resolves a local module path relative to the project root.
 * Works around the fact that Turbopack doesn't support Node.js conditional imports.
 */
function resolveLocal(specifier: string) {
  const resolved = localRequire.resolve(specifier);
  return `./${path.relative(projectRoot, resolved)}`;
}

const nextConfig: NextConfig = {
  turbopack: {
    // Resolve custom aliases:
    // https://nextjs.org/docs/app/api-reference/config/next-config-js/turbopack#resolving-aliases
    resolveAlias: {
      "#analytics": resolveLocal("#analytics"),
    },
  },
};

export default nextConfig;
```

What’s the catch?

At first this might seem appealing, since we can be sure we’re not loading unnecessary cruft. If we have a development version of a dependency, then we don’t even have to pay the transpilation cost.

This is in contrast to a switch/case or map, where the best we could hope for is a dynamic import:

```typescript
export const { analyticsClient } = process.env.ANALYTICS_STRATEGY === 'noop'
  ? await import('./analytics-noop')
  : await import('./analytics-logger');
```

However, with compile-time DI we’ve lost the ability to override dependencies inline, meaning that we can only ever have one version at a given time.

If you want to test a module, you can’t just pass an inline stub dependency to it; instead you’d need to rely on module-level mocking/spies, which I’m dead against.

```typescript
describe("AnalyticsOnLoad", () => {
  test("Calls the analytics client with the name of the page", async () => {
    const analyticsClient = { sendEvent: vi.fn() };

    // How do we pass our inline stub client down
    // if we're swapping deps using the module system?
    await serverAction();

    expect(analyticsClient.sendEvent).toHaveBeenCalledWith('Home');
  });
});
```

Similarly, if you want to have different versions of the same dependency elsewhere in the same app, you’re out of luck. For some use-cases you might be able to introduce indirection just for the sake of testing:

```typescript
import { analyticsClient } from "#analytics";

type Client = { sendEvent: (name: string) => void };

// Export a testable version.
export function createServerAction(client: Client) {
  return function action() {
    // Implementation
  };
}

export const action = createServerAction(analyticsClient);
```

The module system also can’t enforce a type contract by itself. You have to create and maintain that contract deliberately, weakening type-safety.
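One way to maintain that contract by hand, sketched here under the assumption that each implementation is written in TypeScript and annotated against a shared interface (the file names in the comments and the `AnalyticsClient` name are hypothetical):

```typescript
// Shared contract, e.g. in a hypothetical ./analytics-contract.ts.
interface AnalyticsClient {
  sendEvent(name: string): void;
}

// Hypothetical ./analytics-noop.ts: the annotation makes the compiler check the shape.
const noopAnalyticsClient: AnalyticsClient = {
  sendEvent() {
    // Intentionally does nothing.
  },
};

// Hypothetical ./analytics-logger.ts: records events in memory for this sketch.
const logged: string[] = [];
const loggerAnalyticsClient: AnalyticsClient = {
  sendEvent(name) {
    logged.push(name);
  },
};

// An implementation that drifts from the contract fails to compile, e.g.:
// const broken: AnalyticsClient = { send(name: string) {} };

noopAnalyticsClient.sendEvent("Home");
loggerAnalyticsClient.sendEvent("Home");
```

Nothing forces a new implementation to opt in to the annotation, which is the weakness: the resolver will happily pick a file that never mentions the interface.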

All this is to say: using the module system may work for certain scenarios, but it’s not a substitute for providing a way to just pass your dependencies in.

Poor-man’s DI

My preferred compromise is to treat module-system DI as a static composition-root tool, not as the application’s DI system.

Keep business logic behind functions or factories that accept an abstraction/interface wherever possible, facilitating unit and integration tests. For E2E tests, leverage compile-time DI to swap out predictable pieces of your infrastructure, such as feature-flag services or analytics clients.

That way the production app gets static module substitution, tests get plain inline stubs, and request/subtree-specific behavior still has an explicit path via props, React context, router context, or request-derived factories.
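A compact sketch of how those three paths might coexist. The local `analyticsClient` stands in for the value that would be imported from `#analytics`, and `actionForTenant` is a hypothetical request-derived factory:

```typescript
type Client = { sendEvent: (name: string) => void };

// Stand-in for `import { analyticsClient } from "#analytics"` (the statically chosen default).
const events: string[] = [];
const analyticsClient: Client = {
  sendEvent: (name) => {
    events.push(`default:${name}`);
  },
};

// Business logic only knows about the Client abstraction.
function createServerAction(client: Client) {
  return function action(pageName: string): void {
    client.sendEvent(pageName);
  };
}

// Production wiring: the module system picked the default, the factory receives it.
const action = createServerAction(analyticsClient);

// Request-specific behavior: derive a client from request data (hypothetical tenant id).
function actionForTenant(tenantId: string) {
  const tenantClient: Client = {
    sendEvent: (name) => analyticsClient.sendEvent(`${tenantId}/${name}`),
  };
  return createServerAction(tenantClient);
}

// Test wiring: a plain inline stub, no module-level mocking required.
const stubCalls: string[] = [];
const stubbedAction = createServerAction({
  sendEvent: (name) => {
    stubCalls.push(name);
  },
});

action("Home");
actionForTenant("acme")("Pricing");
stubbedAction("Home");
```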

The module system is a good place to choose a default, but it’s a poor place to hide every dependency decision.